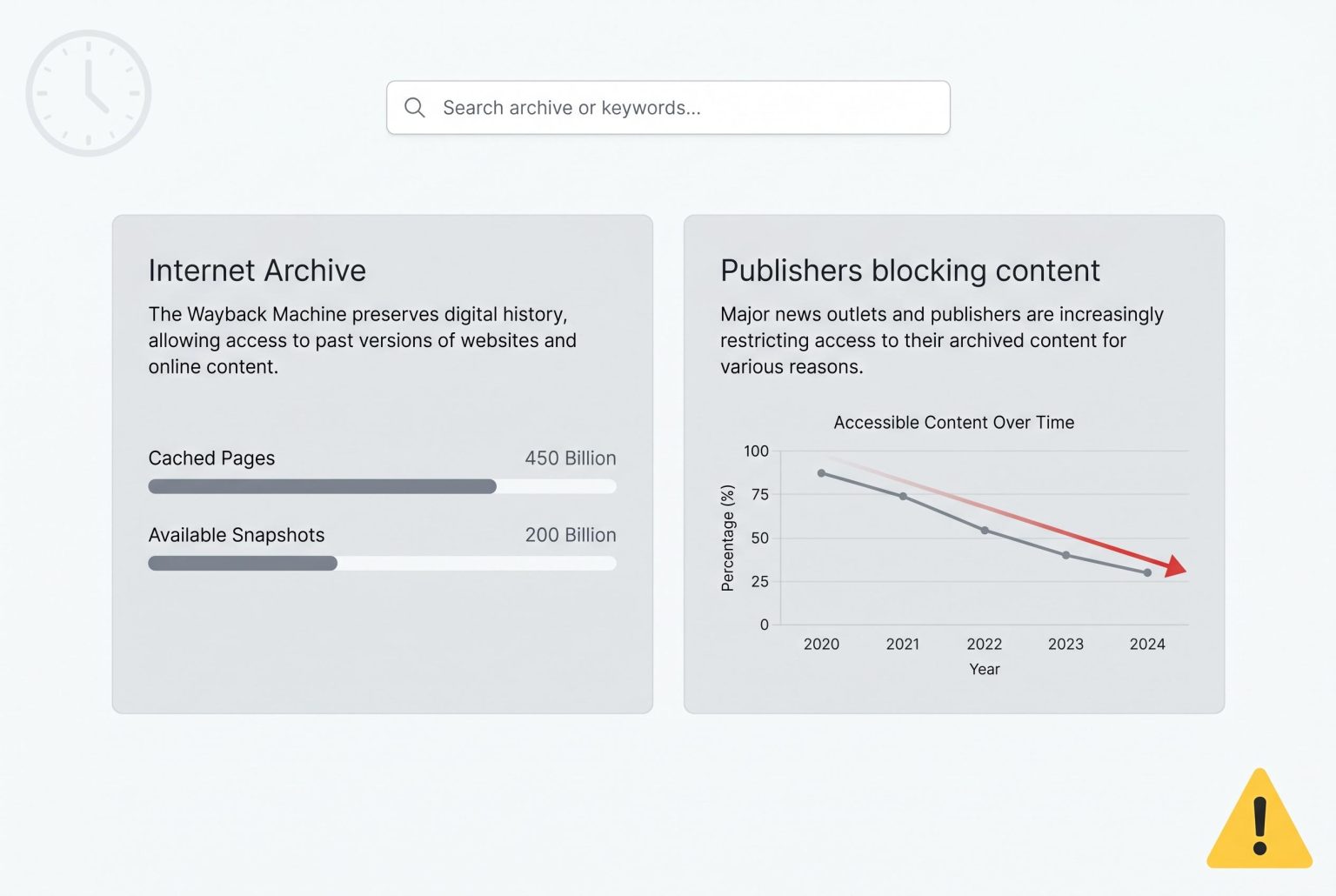

As more publishers block the Internet Archive’s Wayback Machine from their web content, fears are growing that access to the digital historical record could be compromised amid rising concerns about AI development.

The Internet Archive’s Wayback Machine is facing a growing backlash from publishers worried that archived material can be repurposed by AI firms, a shift that could make parts of the web’s memory harder to reach. Reporting by Nieman Lab says 241 news sites across nine countries now explicitly block at least one of the Internet Archive’s crawling bots, with the largest share coming from USA Today Co., formerly known as Gannett.

The dispute reflects a collision between two once-compatible internet ideals: preserving public records and protecting content from unauthorised scraping. According to Nieman Lab, The New York Times has confirmed it is actively blocking the Archive’s crawlers, while The Guardian has taken a more selective approach, keeping some access open but tightening restrictions around its material. The Internet Archive itself has acknowledged taking steps to limit bulk access to parts of its libraries, after earlier incidents in which AI companies were said to have overloaded its systems.

The scale of the restriction is striking. Nieman Lab said 87 per cent of the sites in its sample that block the Archive are owned by USA Today Co., and that most of the affected publishers use the same two blocks in their robots.txt files. The report also found that 93 per cent of the publishers studied restrict at least two of the four bots associated with the Archive, while some outlets, including Le Monde and its English-language edition, have gone further and blocked three.
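
For readers who want to see what such a block looks like in practice, the following is a minimal sketch of how a robots.txt file can be checked against a list of crawler names using Python's standard `urllib.robotparser` module. The user-agent tokens and the sample file are assumptions for illustration: the reporting summarised here does not name the four bots, and the sample is not taken from any publisher's actual robots.txt.

```python
from urllib.robotparser import RobotFileParser

# Assumed user-agent tokens for illustration; the article does not
# list the four bots associated with the Archive.
ASSUMED_ARCHIVE_BOTS = ["archive.org_bot", "ia_archiver"]

# Hypothetical robots.txt content, not copied from any real site.
SAMPLE_ROBOTS_TXT = """\
User-agent: archive.org_bot
Disallow: /

User-agent: ia_archiver
Disallow: /

User-agent: *
Allow: /
"""


def blocked_bots(robots_txt: str, bots: list[str], path: str = "/") -> list[str]:
    """Return the bots from `bots` that the given robots.txt bars from `path`."""
    parser = RobotFileParser()
    # parse() takes the file as a list of lines, so no network request is made.
    parser.parse(robots_txt.splitlines())
    return [bot for bot in bots if not parser.can_fetch(bot, path)]


if __name__ == "__main__":
    print(blocked_bots(SAMPLE_ROBOTS_TXT, ASSUMED_ARCHIVE_BOTS))
```

A robots.txt file is a request rather than an enforcement mechanism, so a check like this only shows what a publisher has asked crawlers to do, not whether a given crawler complies.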

For defenders of the Wayback Machine, the concern is that journalists, historians and ordinary readers could lose access to an increasingly fragile digital record. The Internet Archive has spent nearly three decades building what is effectively a public memory bank for the web, and critics of the new blocking wave argue that limiting it may solve a short-term AI problem at the cost of long-term access. As Nieman Lab notes, there is no federal requirement forcing websites to preserve their material, which leaves the Archive as one of the few robust backstops for online history.

Source: Noah Wire Services

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We’ve since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 7

Notes:

The article was published on April 28, 2026, and references a report from Nieman Lab dated April 20, 2026. The content appears to be original, with no evidence of prior publication. However, the article is based on a report from Nieman Lab, which may limit its originality. Additionally, the article includes a subscription prompt, indicating it is behind a paywall. This raises concerns about accessibility and potential biases in the content. Given these factors, the freshness score is moderate.

Quotes check

Score: 6

Notes:

The article includes direct quotes from Nieman Lab’s report. However, these quotes cannot be independently verified, as they are not attributed to specific individuals or sources. This lack of verifiability raises concerns about the accuracy and reliability of the information presented.

Source reliability

Score: 5

Notes:

The article is published on Substack, a platform that hosts content from various independent creators. While Substack allows for diverse perspectives, it also means that the content is not subject to traditional editorial oversight. This lack of oversight can lead to potential biases and inaccuracies. Additionally, the article relies heavily on a single source, Nieman Lab, which may limit the breadth and depth of the information presented.

Plausibility check

Score: 7

Notes:

The claims made in the article align with reports from other reputable sources, such as The Week and Forbes, regarding news outlets blocking the Internet Archive’s Wayback Machine due to concerns over AI scraping. However, the article’s reliance on a single source and the lack of independent verification of quotes raise questions about the completeness and accuracy of the information.

Overall assessment

Verdict (FAIL, OPEN, PASS): FAIL

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary:

The article presents information about news outlets blocking the Internet Archive’s Wayback Machine due to AI scraping concerns. However, it relies heavily on a single source, Nieman Lab, and includes direct quotes that cannot be independently verified. The content is behind a paywall, restricting access and independent verification. Additionally, the article is a newsletter commentary, which is a form of opinion or editorial writing, rather than factual reporting. Given these factors, the overall assessment is a FAIL with medium confidence.