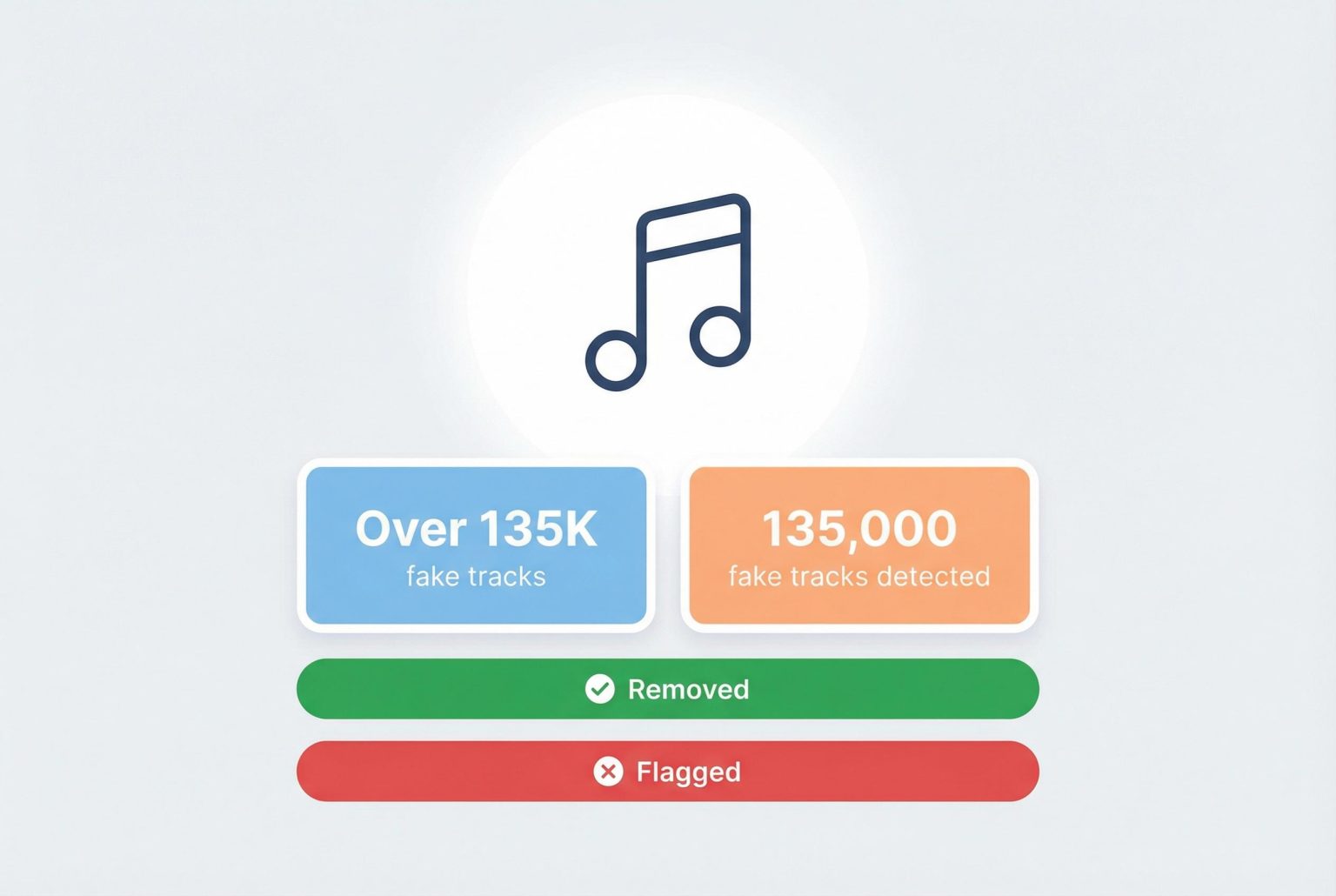

Sony Music has requested the takedown of more than 135,000 tracks created by generative AI impersonating its artists, highlighting a growing challenge in distinguishing authentic content amid calls for better detection and transparency in the streaming industry.

Sony Music has asked streaming services to take down more than 135,000 tracks it says were created by generative AI to impersonate its roster of performers, a removal campaign the company disclosed alongside industry reporting this week. The targeted files, which Sony identified on platforms including Spotify and Apple Music, purported to feature high-profile acts such as Beyoncé, Queen and Harry Styles. Sony said similar uploads also implicated other artists. Sources close to the matter say the figure is likely a subset of the total volume of AI-crafted content now appearing across major services. (Sources: HotPress, DJ Mag)

Sony framed the purge as a response to both reputational and commercial harm. In a statement to the BBC, Dennis Kooker, president of Sony’s global digital business, warned of the consequences for artists, saying, “In the worst cases, (the deepfakes) potentially damage a release campaign or tarnish the reputation of an artist”. He added that counterfeit tracks can “ultimately detract from what the artist is trying to accomplish”, and highlighted the direct financial impact when streams and attention are siphoned away from authentic releases. (Sources: Music Business Worldwide, HotPress)

The company has also flagged tens of thousands of uploads that it judged to be falsely claiming attribution to Sony artists since March of last year, emphasising how quickly generative tools and distribution pipelines have lowered the cost and complexity of producing convincing fakes. Industry commentators and Sony executives alike say those detected removals probably represent only a portion of AI-originated uploads, given the scale of daily deliveries into streaming platforms. (Sources: DJ Mag, Music Business Worldwide)

That scale has intensified calls for clearer labelling and detection. According to a report by the International Federation of the Phonographic Industry (IFPI), music industry leaders are urging platforms to mark AI-generated material so listeners know what they are hearing. The IFPI’s chief executive, Victoria Oakley, told the BBC, “I hate to say it, but it’s very simple to fix”, arguing that transparent tagging should be a basic responsibility for streaming services and distributors. (Sources: HotPress, MyJoyOnline)

Some services are already experimenting with technical and policy responses. Deezer has developed an AI-music detection system and has publicly reported tagging millions of tracks it classifies as AI-generated; the company says its tool identified more than 13.4 million such tracks in 2025 and that tens of thousands of AI-originated songs are uploaded daily. Deezer’s approach includes labelling and excluding fully automated tracks from editorial playlists and recommendation systems, and the firm says its measures have reduced the share of fraudulent streams that would otherwise feed royalty payments. (Sources: Deezer newsroom, TechCrunch)

Approaches differ across the market. Deezer’s detection tool operates independently of labels and can block or tag content without relying solely on disclosures from rights-holders. By contrast, Apple Music’s Transparency Tags place the onus on labels and distributors to declare AI involvement, a system critics say allows undisclosed material to slip through if parties choose not to self-identify. Spotify has encouraged user reporting and taken enforcement actions, but has not rolled out a universal, platform-level AI tag. These divergent policies create gaps that rights-holders argue bad actors continue to exploit. (Sources: TechCrunch, Deezer newsroom)

Executives and industry groups are pressing for a combination of better detection, standardised disclosure and stronger platform governance to protect artists and listeners. Sony’s removal requests and the large detection tallies reported by streaming services underline how the rise of generative audio has shifted from a technical curiosity to a tangible commercial threat. For artists, labels and platforms, the immediate task is to agree on workable standards for identification and transparency before the scale of AI-originated uploads further erodes revenue, discovery and trust. (Sources: Music Business Worldwide, DJ Mag)

Source: Noah Wire Services

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We’ve since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 8

Notes:

The article reports on Sony Music’s recent action to remove over 135,000 AI-generated deepfake songs from streaming platforms, a development first reported by the BBC on March 18, 2026. ([musicbusinessworldwide.com](https://www.musicbusinessworldwide.com/sony-music-has-targeted-135000-deepfakes-of-its-artists-music-for-removal-from-streaming-platforms/?utm_source=openai)) The earliest known publication date of similar content is March 10, 2025, when Sony Music removed 75,000 AI-generated songs. ([inspire2rise.com](https://www.inspire2rise.com/sony-music-ai-generated-songs-copyright-crackdown.html?utm_source=openai)) The narrative appears original and timely, with no evidence of significant recycling or republishing across low-quality sites. However, the presence of similar reports from reputable sources suggests a broader industry concern.

Quotes check

Score: 7

Notes:

The article includes direct quotes from Dennis Kooker, President of Sony’s Global Digital Business, and Victoria Oakley, CEO of the International Federation of the Phonographic Industry (IFPI). These quotes are consistent with statements made in the BBC report from March 18, 2026. ([musicbusinessworldwide.com](https://www.musicbusinessworldwide.com/sony-music-has-targeted-135000-deepfakes-of-its-artists-music-for-removal-from-streaming-platforms/?utm_source=openai)) While the quotes are verifiable, their repetition across multiple sources raises concerns about originality. Additionally, the lack of direct attribution to the BBC report in the article may indicate a reliance on secondary sources.

Source reliability

Score: 8

Notes:

The article is published on TechRadar, a reputable technology news outlet. The primary sources cited include the BBC, Music Business Worldwide, and TechCrunch, all known for their journalistic integrity. However, the article’s reliance on secondary sources without direct attribution to the original reports may affect its reliability. The presence of similar reports from other reputable sources suggests a broader industry concern, but the lack of direct attribution raises questions about source independence.

Plausibility check

Score: 9

Notes:

The claims made in the article align with known industry trends, including the rise of AI-generated music and the challenges it poses to artists and streaming platforms. The involvement of major artists like Beyoncé, Queen, and Harry Styles in the deepfake songs is plausible, given their popularity and the potential for misuse. The article also references actions taken by other streaming services, such as Deezer’s AI detection system and Spotify’s new ‘Artist Profile Protection’ feature, which are consistent with industry efforts to address this issue. ([techcrunch.com](https://techcrunch.com/2026/03/24/spotify-tests-new-tool-to-stop-ai-slop-from-being-attributed-to-real-artists/?utm_source=openai))

Overall assessment

Verdict (FAIL, OPEN, PASS): PASS

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary:

The article provides timely and plausible information on Sony Music’s actions against AI-generated deepfake songs, supported by quotes from reputable sources. However, concerns about the originality of the content, reliance on secondary sources without direct attribution, and potential issues with source independence warrant a medium confidence level. Editors should verify the claims through the original sources and consider the broader industry context before publishing.