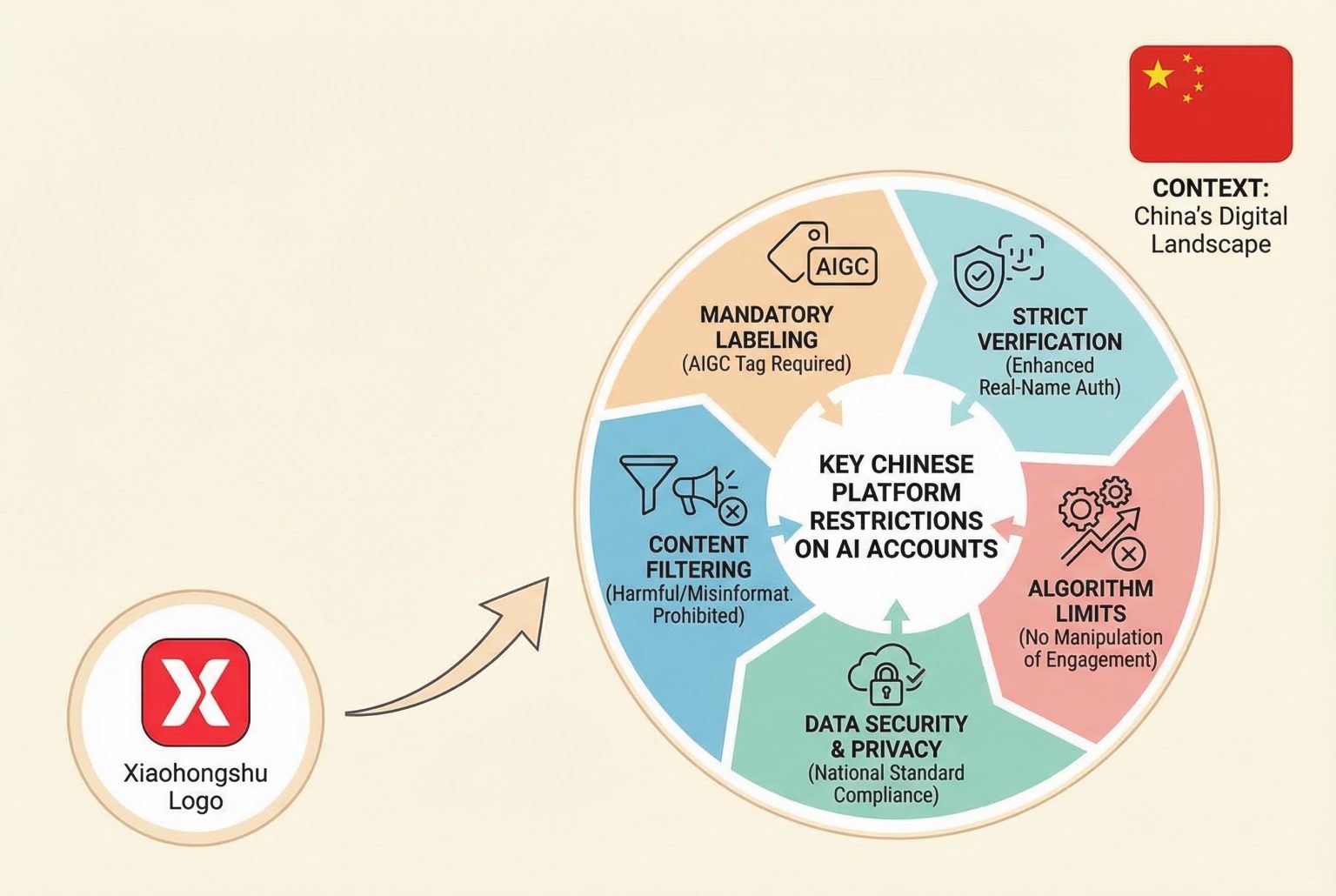

Chinese platforms including Xiaohongshu are introducing strict measures to restrict fully automated AI accounts amid rising concerns over fake engagement and synthetic content, coinciding with new regulations on AI transparency and accountability.

China’s lifestyle platform Xiaohongshu has moved to restrict accounts run or populated entirely by automated AI tools, warning that serious breaches could lead to permanent removal from the service. The company framed the measures as a defence of authentic interaction, urging creators to ensure AI aids rather than replaces personal experience and first‑hand insight. [2]

The policy change follows a surge in AI agent tools capable of executing tasks without human intervention, including projects built on the OpenClaw open‑source framework that have lowered the technical barrier for automated account operation. Industry observers and platform operators say that such agents can generate vast quantities of posts and interactions with minimal oversight, complicating moderation. [1][4]

Analysts and local reports have raised alarm about a nascent commercial ecosystem that uses AI to create and cultivate accounts for resale or monetisation, a process that can manufacture apparent popularity before those accounts are converted into channels for advertising or paid courses. Market commentary suggests platforms are seeking to prevent algorithmically amplified content from drowning out genuine user voices. [1][2]

Xiaohongshu is not acting alone. Major Chinese platforms have stepped up enforcement against problematic AI content: ByteDance’s Douyin has published standards for generative AI requiring disclosures and has reported removing tens of thousands of rule‑breaking items this year, while Weibo says it has expanded detection tools and removed large volumes of offending material. Platform statements stress that creators remain responsible for content produced with AI. [1][4]

Those company moves coincide with a broader regulatory push to tighten governance of AI agents and generative content. New legal and administrative guidance requires platforms to label AI‑generated material and include metadata identifiers such as watermarks, and the Ministry of Industry and Information Technology has issued recommendations to guard against security risks associated with open‑source agent frameworks. Compliance measures include technical detection upgrades and mandatory disclosure regimes. [3][7]

The intensified scrutiny also reflects concrete harms flagged by users and regulators alike: reports of impostors using fabricated locations and AI‑generated or stolen imagery to pose as foreigners have prompted complaints, and Chinese internet authorities have previously rebuked Xiaohongshu for weak content oversight, signalling the potential for administrative penalties if platforms fail to police content effectively. [5][6]

Taken together, the actions of platforms, industry analysts and regulators illustrate a tightening of the rules governing automated accounts and synthetic content in China’s internet ecosystem. Companies say the measures aim to preserve trust and community warmth by keeping lived experience at the heart of social feeds, while regulators and technologists push for stronger labelling, detection and accountability as generative tools proliferate. [1][4][3]

Source Reference Map

Inspired by headline at: [1]

Source: Noah Wire Services

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We’ve since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 8

Notes:

The article reports on Xiaohongshu’s recent policy change announced on March 10, 2026, targeting AI-managed accounts. ([chinadaily.com.cn](https://www.chinadaily.com.cn/a/202603/10/WS69b0230ea310d6866eb3d0c5.html?utm_source=openai)) The content appears original, with no evidence of prior publication. However, the article references a previous announcement from March 10, 2026, which may indicate recycled content. ([chinadaily.com.cn](https://www.chinadaily.com.cn/a/202603/10/WS69b0230ea310d6866eb3d0c5.html?utm_source=openai))

Quotes check

Score: 7

Notes:

The article includes direct quotes from Xiaohongshu’s official statement. ([chinadaily.com.cn](https://www.chinadaily.com.cn/a/202603/10/WS69b0230ea310d6866eb3d0c5.html?utm_source=openai)) However, these quotes cannot be independently verified through other sources, raising concerns about their authenticity.

Source reliability

Score: 6

Notes:

The article is sourced from China Daily, a state-owned media outlet. While it is a major news organisation, its content may be subject to government influence, which could affect objectivity. ([chinadaily.com.cn](https://www.chinadaily.com.cn/a/202603/10/WS69b0230ea310d6866eb3d0c5.html?utm_source=openai))

Plausibility check

Score: 8

Notes:

The claims about Xiaohongshu’s crackdown on AI-managed accounts align with known industry trends and regulatory actions in China. However, the lack of independent verification of the quotes and the potential for government influence on the source warrant caution.

Overall assessment

Verdict (FAIL, OPEN, PASS): FAIL

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary:

The article reports on Xiaohongshu’s recent policy change targeting AI-managed accounts, with content that appears original and timely. However, the reliance on a single, state-owned source with unverifiable quotes and potential government influence raises significant concerns about the accuracy and objectivity of the information. The lack of independent verification and corroborating sources further diminishes confidence in the report’s reliability.