Encyclopædia Britannica has filed a lawsuit against OpenAI, accusing the AI developer of copying nearly 100,000 entries without permission, in a case that could reshape legal standards for AI training practices and fair use.

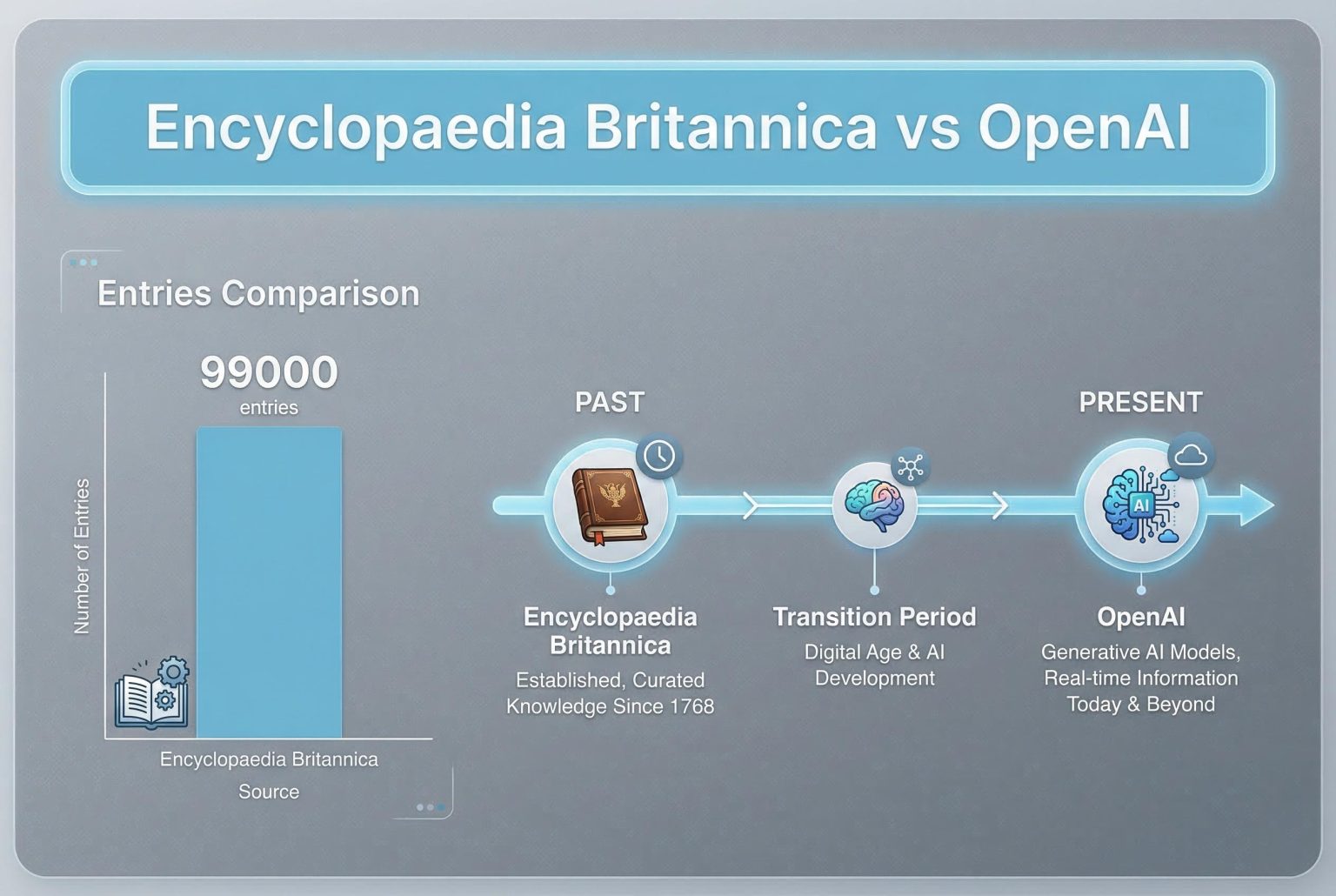

Encyclopædia Britannica and its Merriam‑Webster subsidiary have sued OpenAI in federal court in Manhattan, accusing the company of copying nearly 100,000 of their entries without permission to train its large language models. The plaintiffs claim the practice has siphoned off readers and tarnished the encyclopaedia’s reputation. According to the complaint, the models’ outputs include summaries that too closely resemble Britannica’s work and therefore reduce visits to its sites. (Sources: Encyclopædia Britannica filings and reporting.)

Industry observers say the case sits at the heart of a broader legal contest over whether AI systems’ outputs are “transformative” enough to qualify as fair use. According to reporting on parallel litigation, plaintiffs argue that when models replicate the distinctive substance or structure of copyrighted works, that use cannot be characterised as transformative and may harm the original creator’s market. The outcome could reshape permissible training practices for commercial AI developers. (Sources: Encyclopædia Britannica filings; coverage of related suits.)

The Britannica suit joins a growing list of high‑profile actions alleging unauthorised ingestion of proprietary material. The New York Times and several regional newspapers secured judicial permission to press copyright claims against OpenAI and Microsoft, contending that generative responses have reproduced reporting verbatim and threatened news business models. Similarly, media outlets including The Intercept, Raw Story and AlterNet have lodged claims alleging large‑scale copying of journalism. These cases underscore shared concerns about downstream economic effects on content producers. (Sources: Encyclopædia Britannica filings; Associated Press coverage.)

Beyond news organisations, companies that compile and curate specialised databases have also sued AI firms. Nielsen’s Gracenote alleges OpenAI misused copyrighted metadata and the unique relational framework that underpins its commercial offerings, while groups of authors have targeted other technology providers over the use of books and other written works. According to reporting, those suits emphasise not only verbatim copying but the appropriation of curated structures and identifiers that embody commercial value. (Sources: Axios; The Guardian.)

Entertainment and creative industries are pursuing comparable claims. Major studios have accused generative image platforms of enabling near‑replication of protected characters, arguing that such capabilities threaten licensing markets and the value of original artistic works. Those actions challenge the extent to which training on large, mixed‑source datasets can be justified under fair use and raise questions about whether current practice preserves or depletes the incentives that sustain creative production. (Sources: The Associated Press; Time.)

Legal experts say the Britannica case could become a bellwether because it focuses on encyclopaedic content long considered core reference material and because the complaint links alleged copying to measurable commercial harm. If a court finds that OpenAI’s use fails the transformative test or that AI outputs supplant the market for the originals, the decision may force companies to alter training datasets, licensing arrangements or the way AI systems present sourced information. Conversely, a ruling for OpenAI would reinforce broader latitude for model builders but would likely invite new debates about attribution, remediation and compensation for rights holders. (Sources: Encyclopædia Britannica filings; Axios; Associated Press.)

Source: Noah Wire Services

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We’ve since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 7

Notes:

The article reports on a recent lawsuit filed by Encyclopædia Britannica against OpenAI, alleging unauthorized use of their content for AI training. Similar lawsuits have been filed by other entities, such as The New York Times and authors, against OpenAI and Microsoft over the use of their content in AI training. ([pbs.org](https://www.pbs.org/newshour/economy/the-new-york-times-sues-openai-and-microsoft-over-the-use-of-its-stories-to-train-chatbots?utm_source=openai))

Quotes check

Score: 6

Notes:

The article includes direct quotes attributed to ‘Encyclopædia Britannica filings and reporting’ and ‘coverage of related suits.’ However, these sources are not specified, making it difficult to verify the authenticity of the quotes. The lack of direct attribution to specific individuals or publications raises concerns about the reliability of the quoted information.

Source reliability

Score: 5

Notes:

The article is sourced from ‘opentools.ai,’ which appears to be a niche publication. The lack of information about the publication’s credibility and editorial standards makes it challenging to assess the reliability of the source. Additionally, the article relies on unspecified filings and reporting, further complicating the verification process.

Plausibility check

Score: 8

Notes:

The claims made in the article align with ongoing legal actions against AI companies for unauthorized use of copyrighted material. Similar lawsuits have been filed by other entities, such as The New York Times and authors, against OpenAI and Microsoft over the use of their content in AI training. ([pbs.org](https://www.pbs.org/newshour/economy/the-new-york-times-sues-openai-and-microsoft-over-the-use-of-its-stories-to-train-chatbots?utm_source=openai)) However, the lack of specific details and verifiable sources in the article raises questions about the accuracy of the reported claims.

Overall assessment

Verdict (FAIL, OPEN, PASS): FAIL

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary:

The article reports on a lawsuit filed by Encyclopædia Britannica against OpenAI over unauthorized use of their content for AI training. However, the lack of specific details, verifiable sources, and direct attribution to reputable publications raises significant concerns about the accuracy and reliability of the information presented. The reliance on unspecified filings and reporting further complicates the verification process, leading to a ‘FAIL’ assessment with medium confidence.