As artificial intelligence reshapes the media landscape, publishers are grappling with how to monetise their content. The contrasting responses of outlets such as Le Monde, Wikipedia and Business Insider highlight the complex economic and ethical challenges at stake.

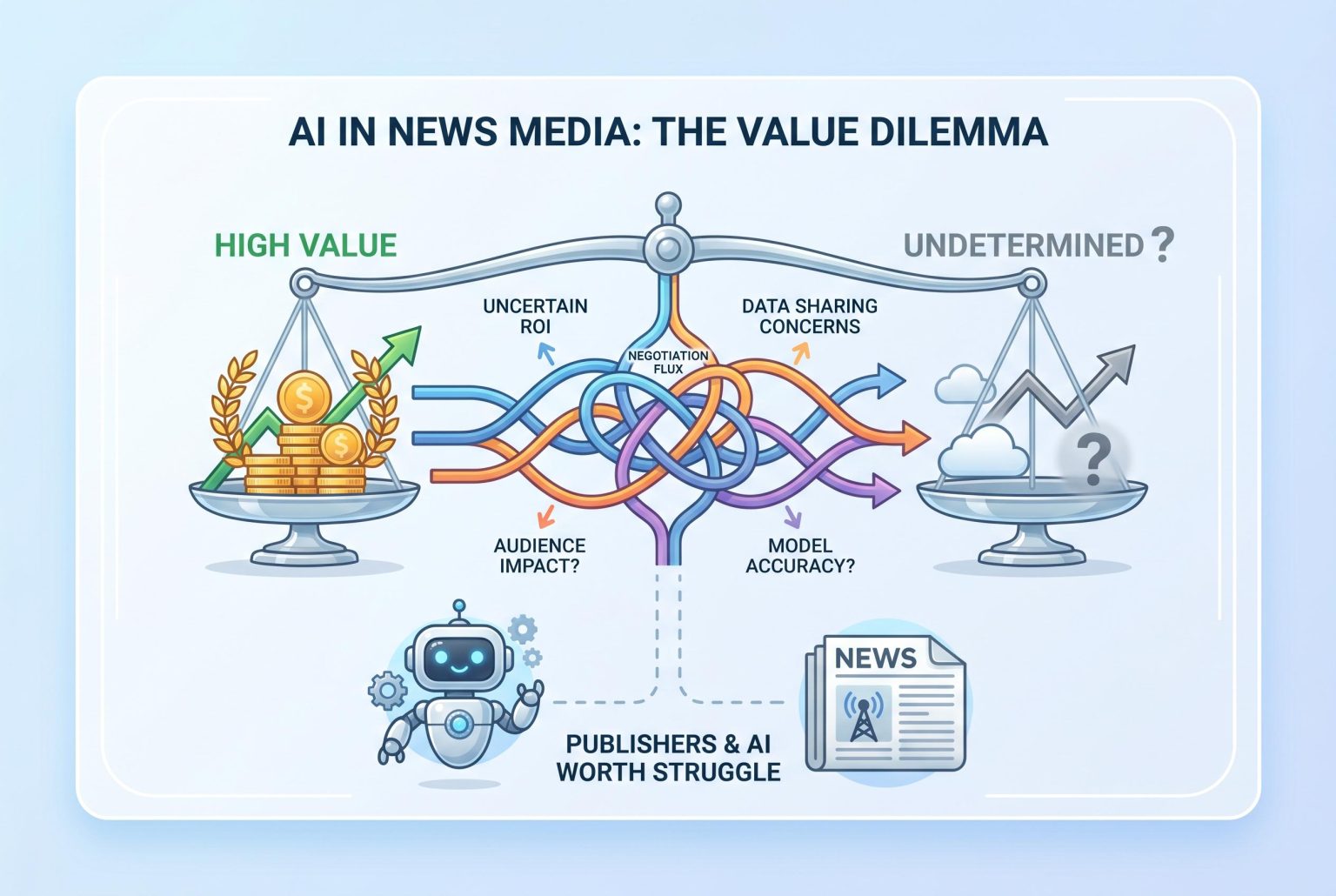

The question publishers are circling is no longer whether to use artificial intelligence in their operations, but what their journalism is actually worth to the companies building AI products around it. That is the argument at the heart of Omar Oakes’ latest column, which frames the issue as a commercial one as much as an editorial one: if media content helps power systems valued in the hundreds of billions, what price should attach to it, and what happens when publishers fail to set that price for themselves?

Three very different responses to that dilemma are now emerging. According to Le Monde, the French newspaper has struck licensing arrangements with OpenAI, Perplexity and Meta, presenting the deals as a way to protect rights, secure compensation and preserve editorial independence as AI platforms expand. Le Monde says the arrangement with Perplexity is non-exclusive and designed to drive readers back to its journalism through direct links, while it cast its later deal with Meta as part of a wider effort to defend content rights and ensure fair revenue distribution. Wikipedia has moved in the opposite direction, banning large language models from generating or rewriting articles on its English-language platform while still allowing limited use for copy-editing and translation under human review. Business Insider, meanwhile, has reportedly taken a more performative approach, announcing a quarterly prize for staff AI use even as its parent company, Axel Springer, has been cutting jobs and publicly declaring itself committed to AI.

Taken together, the three responses underline how differently organisations are valuing the same technological shift. Le Monde appears to see premium journalism as an asset that can be licensed and monetised, but only if the brand remains trusted enough to retain reader traffic. Wikipedia’s editors are treating the site’s curated knowledge base as something to be protected from machine-written contamination. And Business Insider’s AI prize, as Oakes argues, looks less like a strategy than a sign that some media groups are keen to appear forward-looking before they have worked out what they are defending, or why.

Wikipedia’s move is especially telling because it reflects a deeper anxiety than simple mistrust of AI output. The site’s volunteer editors concluded that machine-generated text frequently conflicts with its standards of accuracy, verifiability and neutrality, and recent reporting says the policy shift followed a vote within the community. Yet the broader challenge may be whether AI threatens Wikipedia more by reading it than by writing for it, since the site’s role as the web’s baseline reference layer depends on being used, not just kept clean. In that sense, the ban is defensive, but not necessarily a long-term answer to the platform’s relevance.

The wider warning for publishers is that delaying the conversation does not avoid the economics. If their content is highly valuable to AI companies, then every licensing deal struck elsewhere risks setting a benchmark they may later struggle to beat. If, on the other hand, their output is largely interchangeable and easily replicated, then AI is exposing a structural weakness that predated the technology. Oakes’ point is that many media businesses are still avoiding that reckoning by counting tools, prizes or adoption rates instead of answering the more uncomfortable question: what is the content worth, and to whom?

Source Reference Map

Inspired by headline at: [1]

Sources by paragraph:

- Paragraph 1: [2], [3]

- Paragraph 2: [2], [3], [4], [5], [6], [7]

- Paragraph 3: [2], [3], [7]

- Paragraph 4: [4], [5], [6], [7]

- Paragraph 5: [2], [3], [4], [5], [6], [7]

Source: Noah Wire Services

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We’ve since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 7

Notes:

The article was published on April 16, 2026. The referenced events, such as Le Monde’s partnerships with OpenAI and Perplexity, occurred in May and December 2025, respectively. The Wikipedia policy change regarding AI-generated content was reported in March 2026. These events are recent, but the article’s timeliness is borderline, as it discusses events that occurred between roughly one and eleven months before publication. Additionally, the article appears to be a commentary piece, which may not be as time-sensitive as a news report. The source, More About Advertising, is a niche publication focusing on advertising and media, which may limit its reach and impact.

Quotes check

Score: 6

Notes:

The article includes direct quotes from Le Monde’s announcements and Wikipedia’s policy change. However, these quotes are not independently verified within the article. The absence of direct links to the original sources raises concerns about the accuracy and context of the quotes. Without access to the original statements, it’s challenging to assess the reliability of the quotes.

Source reliability

Score: 5

Notes:

More About Advertising is a niche publication focusing on advertising and media. While it provides industry-specific insights, its limited reach and focus may affect the comprehensiveness and objectivity of its reporting. The article’s reliance on a single source for information about Le Monde’s partnerships and Wikipedia’s policy change without cross-referencing with other reputable outlets raises concerns about the reliability of the information presented.

Plausibility check

Score: 7

Notes:

The article discusses Le Monde’s partnerships with AI companies and Wikipedia’s policy change regarding AI-generated content, which are plausible and have been reported by other sources. However, the article’s analysis and conclusions are based on a single source, More About Advertising, without corroboration from other reputable outlets. This lack of independent verification raises questions about the accuracy and objectivity of the analysis.

Overall assessment

Verdict (FAIL, OPEN, PASS): FAIL

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary:

The article presents a commentary on recent developments in the media and AI sectors, referencing Le Monde’s partnerships and Wikipedia’s policy change. However, it relies on a single, niche source without independent verification or corroboration from other reputable outlets. The absence of direct links to original statements and the lack of cross-referencing with other sources raise concerns about the accuracy and objectivity of the information presented. Given these issues, the content does not meet the necessary standards for publication.