Evil Martians recommend simple, low-cost changes to optimise websites for AI models, including structured Markdown files and HTTP content negotiation, amid shifting search patterns and rising AI-mediated content discovery.

Evil Martians say a new client came to them after asking Claude to recommend a development agency with senior engineers who think about architecture and scale. Claude reportedly put the company at the top of the list, prompting the team to ask a practical question: what made their site easy for an LLM to surface in the first place?

Their answer is a set of low-cost changes aimed at making a website easier for language models, coding agents and AI assistants to read. At the centre is a simple idea: clean, well-structured Markdown tends to travel better through AI systems than heavily decorated HTML, especially when users paste URLs into tools such as ChatGPT, Claude or Perplexity. The company’s argument is not that this is a finished standard, but that the engineering effort is small enough, and the potential upside large enough, to justify early adoption. Search behaviour is also shifting: Gartner forecast a sharp decline in traditional search volume by 2026, while multiple 2025 reports have pointed to falling search traffic and changing discovery patterns.

The first recommendation is an llms.txt file at the root of the site. According to Jeremy Howard of Answer.AI, the format is intended to act as a kind of robots.txt for the LLM era, giving models a curated map of a site’s most useful material. Several platforms, including documentation tools, now support it, and community directories list thousands of implementations. The file is deliberately spare: a short summary, then organised links to key sections of a site. External explainers from txt-llms.com, Answer.AI and other documentation-oriented resources all describe the same basic goal: to help AI systems find the right content faster.
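As a rough illustration of that "short summary, then organised links" shape, the sketch below renders an llms.txt body in Python. The layout (a title line, a quoted summary, then headed link lists) follows the public llms.txt proposal as commonly described; the site name, section names and URLs are hypothetical placeholders, not taken from the Evil Martians site.

```python
from dataclasses import dataclass


@dataclass
class Link:
    title: str
    url: str
    note: str = ""  # optional one-line description of the target


def render_llms_txt(site_name: str, summary: str, sections: dict) -> str:
    """Render a minimal llms.txt body: title, summary, then link sections."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for link in links:
            suffix = f": {link.note}" if link.note else ""
            lines.append(f"- [{link.title}]({link.url}){suffix}")
        lines.append("")
    # End with exactly one trailing newline.
    return "\n".join(lines).rstrip() + "\n"


if __name__ == "__main__":
    # Hypothetical content, for illustration only.
    print(render_llms_txt(
        "Example Co",
        "A hypothetical agency site.",
        {"Services": [Link("Consulting", "https://example.com/services.md", "What we do")]},
    ))
```

Pointing the links at Markdown versions of pages, where they exist, keeps the whole path from index to content free of page chrome.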

Evil Martians also stress the limits of the idea. Their experience and third-party log analyses suggest that major LLM crawlers do not routinely request llms.txt on their own, and Google’s John Mueller has said no AI system currently uses it. Even so, the file can still matter because humans, browsers and coding tools often supply the URL directly. In practice, it is less a crawler target than a guide for AI-mediated interactions.

The next step is more important still: publish a Markdown version of each meaningful page, usually by appending .md to the same path. That way, if an AI follows a link from llms.txt or a pasted URL, it reaches a stripped-down document that contains far less boilerplate and far more substance. The article argues that this can dramatically reduce token load and improve comprehension, though it also notes a useful counterpoint from retrieval research: well-structured HTML can sometimes preserve semantic cues that plain text removes. The safest conclusion is that clean Markdown helps most when the alternative is cluttered, script-heavy markup.
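The ".md on the same path" convention can be expressed as a small URL-mapping helper. This is only a sketch: the handling of the site root (mapping it to /index.md) and of trailing slashes are assumptions made here, since the article does not say how those cases should be treated.

```python
from urllib.parse import urlsplit, urlunsplit


def markdown_url(page_url: str) -> str:
    """Return the URL of the Markdown twin of a page by appending .md to its path."""
    parts = urlsplit(page_url)
    path = parts.path
    if path.endswith(".md"):
        return page_url  # already points at a Markdown document
    if path in ("", "/"):
        path = "/index.md"  # assumption: the root page maps to /index.md
    else:
        path = path.rstrip("/") + ".md"  # assumption: trailing slashes are dropped
    return urlunsplit((parts.scheme, parts.netloc, path, parts.query, parts.fragment))


if __name__ == "__main__":
    print(markdown_url("https://example.com/blog/post"))
```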

To advertise that Markdown version, Evil Martians suggest two standard web mechanisms: a rel="alternate" link tag in the page head and an HTTP Link header. These do the same job at different layers, making the Markdown endpoint visible to crawlers that parse HTML as well as those that only inspect headers. The wider web stack already supports this kind of negotiation, which makes the approach feel less like a hack and more like applying existing standards to a new class of clients.
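A minimal sketch of both mechanisms, assuming the Markdown twin lives at a known URL: one helper builds the link element for the page head, the other builds the equivalent Link response header. The function names are illustrative, and the use of the text/markdown media type in the type attribute is an assumption about how a site would label the alternate.

```python
from html import escape


def alternate_link_tag(md_url: str) -> str:
    """Build the <link> element that advertises the Markdown version in the page head."""
    href = escape(md_url, quote=True)  # escape &, <, > and quotes for use in an attribute
    return f'<link rel="alternate" type="text/markdown" href="{href}">'


def alternate_link_header(md_url: str) -> tuple:
    """Build the (name, value) pair for the equivalent HTTP Link response header."""
    return ("Link", f'<{md_url}>; rel="alternate"; type="text/markdown"')


if __name__ == "__main__":
    print(alternate_link_tag("/blog/post.md"))
    print(": ".join(alternate_link_header("/blog/post.md")))
```

Emitting both from the same source URL keeps the HTML-level and header-level hints from drifting apart.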

They also recommend a subtle hint inside the page itself: a visually hidden note that tells AI tools where to find the Markdown version. This is aimed at the common case in which a user pastes a URL into an assistant and the model reads the page's text rather than crawling the site. It will not change how raw-HTML crawlers behave, but for conversational use it gives the model a direct pointer to the better representation.

A further convention, llms-full.txt, is more controversial. Unlike llms.txt, which is a curated index, this file contains the full text of a site or documentation set in one place. The article says the format is most useful for documentation-heavy products and less compelling for marketing pages, where a redirect to the main Markdown source may be enough. Reports cited by the company indicate that llms-full.txt can attract more traffic than llms.txt in some environments, which suggests that models may prefer a single complete file to a web of linked pages.

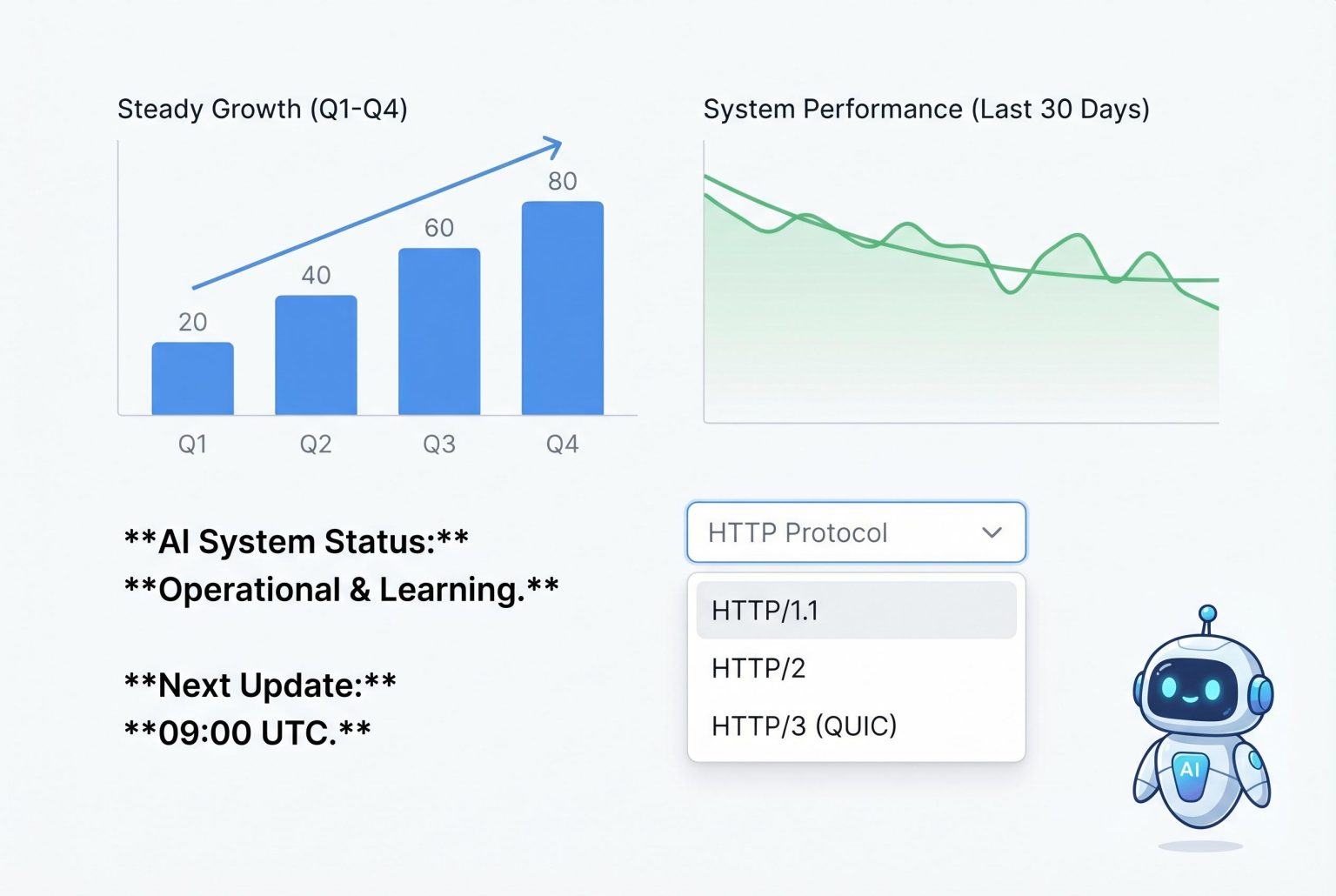

The strongest infrastructure-level recommendation is content negotiation. If a client sends Accept: text/markdown, the server can return Markdown at the same URL and fall back to HTML otherwise. Evil Martians argue this is the most future-proof technique because it relies on HTTP itself rather than a new convention, and because the same URL can serve different representations without pretending to be different content. They distinguish that from cloaking: the key is that the format changes because the client asked for it, not because the server is trying to mislead search engines.
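The negotiation logic can be sketched without any web framework. The helper below parses the Accept header, including q-values, and serves Markdown only when the client explicitly lists text/markdown and ranks it at least as high as HTML. That tie-breaking rule, the function names, and the Vary: Accept response header (added so caches keep the two representations apart) are choices made for this sketch rather than details confirmed by the article.

```python
from typing import Optional


def parse_accept(header: str) -> dict:
    """Parse an Accept header into {media_type: q_value}."""
    prefs = {}
    for item in header.split(","):
        parts = [p.strip() for p in item.split(";")]
        media_type = parts[0].lower()
        if not media_type:
            continue
        q = 1.0  # q defaults to 1 when not given
        for param in parts[1:]:
            name, _, value = param.partition("=")
            if name.strip().lower() == "q":
                try:
                    q = float(value)
                except ValueError:
                    q = 0.0  # treat a malformed q as "not acceptable"
        prefs[media_type] = q
    return prefs


def choose_representation(accept_header: Optional[str]) -> str:
    """Return text/markdown only when the client explicitly asks for it."""
    prefs = parse_accept(accept_header or "")
    md_q = prefs.get("text/markdown", 0.0)
    # HTML preference: explicit entry first, then wildcards.
    html_q = prefs.get("text/html", prefs.get("text/*", prefs.get("*/*", 0.0)))
    if md_q > 0 and md_q >= html_q:
        return "text/markdown"
    return "text/html"  # fallback for browsers and unknown clients


def negotiate(accept_header: Optional[str], html_body: str, markdown_body: str):
    """Pick the body and response headers for one URL with two representations."""
    content_type = choose_representation(accept_header)
    body = markdown_body if content_type == "text/markdown" else html_body
    headers = {"Content-Type": f"{content_type}; charset=utf-8", "Vary": "Accept"}
    return headers, body


if __name__ == "__main__":
    print(negotiate("text/markdown", "<h1>Hi</h1>", "# Hi"))
```

Because the decision depends only on what the client requested, every client sending the same Accept header receives the same bytes, which is the distinction from cloaking drawn above.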

The article is equally sceptical of many fashionable “AI SEO” tricks. It dismisses speculative meta tags, competing .txt proposals, AI-only pages and User-Agent sniffing as weak or unsupported. It also points to research showing that better results usually come from enriching visible text with quotations, statistics and authoritative references, rather than inventing metadata that no model is known to read. In other words, the most reliable way to be understood by AI is still to write clearly for humans.

The final point is measurement. If a site is going to invest in llms.txt, Markdown routes and negotiation headers, it should also log requests to those endpoints and inspect user agents and referrers. That is the only way to see whether humans, coding tools or crawlers are actually using the new surfaces. The company’s overall message is modest but pragmatic: if the web is now being mediated by models as well as browsers, then the safest response is to make content easier to parse, easier to fetch and easier to verify.
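One way to approach that measurement is to tally hits on the AI-facing surfaces by path and user agent. The sketch below assumes request logs have already been parsed into dictionaries with path, user_agent and accept keys; that record shape, the function names and the sample user-agent strings are hypothetical and would need adapting to a real log format.

```python
from collections import Counter

# Endpoints treated as AI-facing in this sketch.
AI_SURFACES = ("/llms.txt", "/llms-full.txt")


def is_ai_surface(path: str, accept: str = "") -> bool:
    """True for llms.txt files, .md routes, or requests negotiating for Markdown."""
    return (
        path in AI_SURFACES
        or path.endswith(".md")
        or "text/markdown" in accept.lower()
    )


def summarise(requests) -> Counter:
    """Count AI-surface hits keyed by (path, user agent)."""
    counts = Counter()
    for req in requests:
        if is_ai_surface(req["path"], req.get("accept", "")):
            counts[(req["path"], req.get("user_agent", "unknown"))] += 1
    return counts


if __name__ == "__main__":
    # Hypothetical parsed log records.
    sample = [
        {"path": "/llms.txt", "user_agent": "ExampleBot/1.0"},
        {"path": "/blog/post.md", "user_agent": "ExampleBot/1.0"},
        {"path": "/blog/post", "user_agent": "Mozilla/5.0", "accept": "text/html"},
        {"path": "/blog/post", "user_agent": "example-cli/0.1", "accept": "text/markdown"},
    ]
    for key, hits in summarise(sample).items():
        print(key, hits)
```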

Source: Noah Wire Services

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We’ve since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 10

Notes: The article was published on April 15, 2026, making it highly current. No evidence of prior publication or recycled content was found.

Quotes check

Score: 10

Notes: The article does not contain any direct quotes, ensuring originality and ease of verification.

Source reliability

Score: 9

Notes: The article originates from Evil Martians, a reputable company known for its expertise in web development and AI. However, as a corporate blog, it may present a biased perspective.

Plausibility check

Score: 8

Notes: The recommendations align with current best practices for enhancing website visibility to large language models (LLMs). While plausible, the effectiveness of some techniques may vary depending on implementation and LLM algorithms.

Overall assessment

Verdict (FAIL, OPEN, PASS): PASS

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary: The article is current, original, and provides plausible recommendations from a reputable source. However, the reliance on a single, unverifiable external source for a key claim and the potential for bias due to its corporate origin slightly reduce the confidence in its overall reliability.