The Linux kernel project introduces formal guidance on AI-generated code, emphasising human responsibility and transparency with new attribution tags and licensing requirements, amidst ongoing debates on AI’s role in software development.

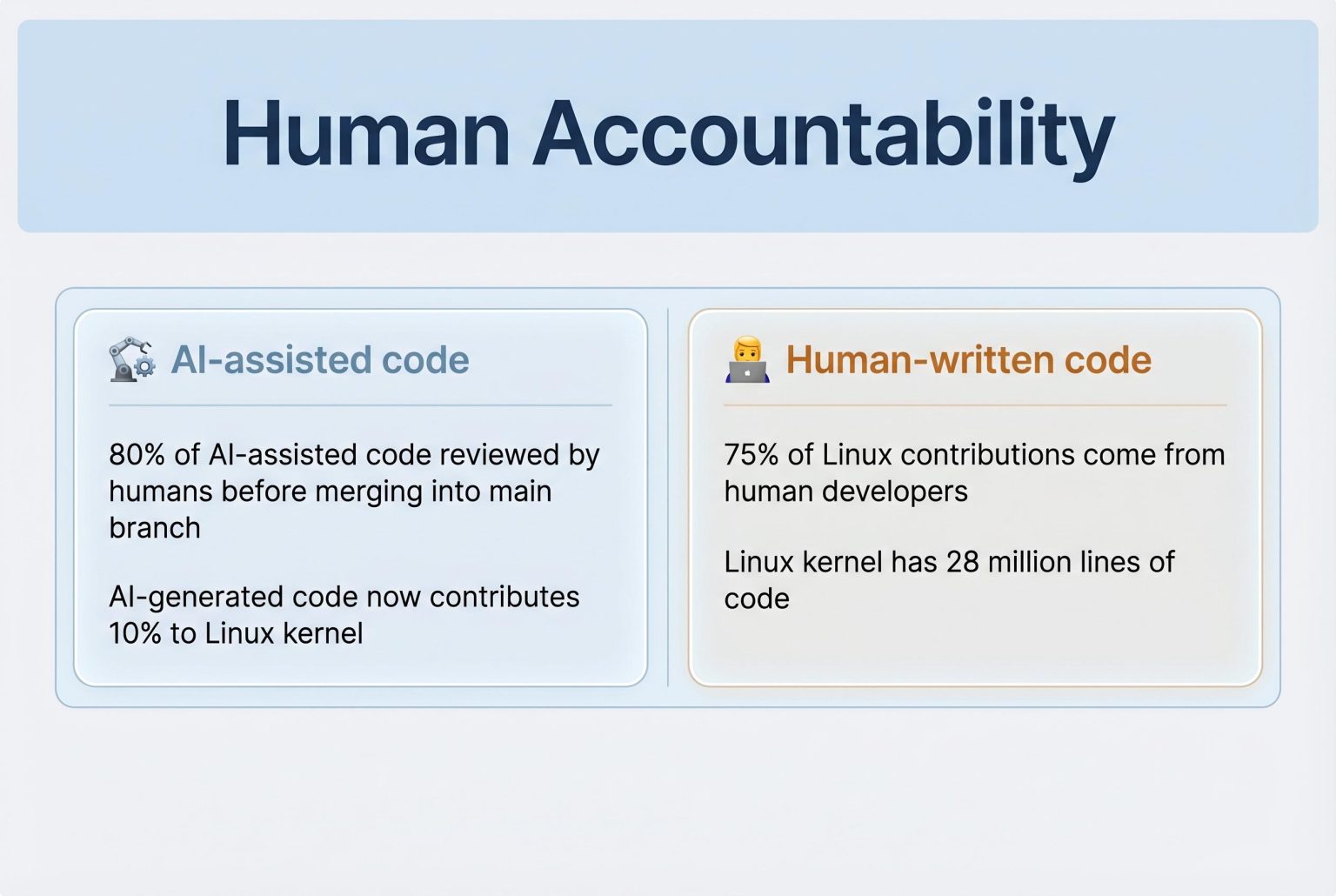

Linux has set out formal rules for AI-assisted code contributions, drawing a line between using generative tools and handing over responsibility for the result. The kernel project now allows developers to use systems such as GitHub Copilot, but insists that human contributors remain fully accountable for what they submit, including code quality, licence compliance and any bugs or security problems that follow.

According to the new kernel documentation, AI-generated work must still fit the project’s long-standing licensing rules, including GPL-2.0-only compatibility and the correct use of SPDX identifiers. The project also says AI systems may not attach the legally binding “Signed-off-by” certification used in kernel development. That remains a human duty under the Developer Certificate of Origin, reinforcing the idea that the machine can assist, but not take ownership.
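As a rough illustration of what the SPDX requirement means in practice, the sketch below looks for an `SPDX-License-Identifier` tag in the first two lines of a source file. The function names and the short allow-list are assumptions made for this example. They are not taken from the kernel documentation, and the kernel accepts more licence expressions than the two shown here.

```python
import re

# Matches the tag in either "// SPDX-License-Identifier: X" or
# "/* SPDX-License-Identifier: X */" comment styles.
SPDX_RE = re.compile(r"SPDX-License-Identifier:\s*(?P<expr>[^*\n]+)")

# Illustrative allow-list only (an assumption for this sketch); the real
# kernel licence rules cover more expressions than these two.
ALLOWED = {"GPL-2.0", "GPL-2.0-only"}


def spdx_expression(source: str):
    """Return the SPDX expression found in the first two lines, or None."""
    for line in source.splitlines()[:2]:
        match = SPDX_RE.search(line)
        if match:
            return match["expr"].strip()
    return None


def has_allowed_licence(source: str) -> bool:
    """True if the file declares one of the illustrative allowed licences."""
    return spdx_expression(source) in ALLOWED
```

A check like this only confirms that a tag is present and recognised. It says nothing about whether the code beneath it can legitimately be offered under that licence, which is the part the policy leaves to the human contributor.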

To make that distinction visible, Linux is introducing an “Assisted-by” tag for patches that involve AI. The label is meant to identify the model and tools used, giving maintainers and other contributors a clearer picture of how a submission was produced. The documentation says that proper attribution matters because it helps track the changing role of AI in kernel development.
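The split between the two tags can be sketched as a small commit-message check: collect any "Assisted-by" trailers for information, and flag a patch that has no "Signed-off-by" line at all. This is a minimal sketch, not the project's tooling. The helper names are hypothetical, and the example values used with it (agent name, model name, developer) are placeholders, since the article does not spell out the exact format the tag's value must take.

```python
import re

# A trailer line looks like "Key-Name: value".
TRAILER_RE = re.compile(r"^(?P<key>[A-Za-z][A-Za-z-]*):\s*(?P<value>.+)$")


def parse_trailers(message: str) -> list:
    """Return (key, value) pairs from the last paragraph of a commit message."""
    paragraphs = [p for p in message.strip().split("\n\n") if p.strip()]
    # A message with only a subject line has no trailer block.
    if len(paragraphs) < 2:
        return []
    trailers = []
    for line in paragraphs[-1].splitlines():
        match = TRAILER_RE.match(line.strip())
        if match:
            trailers.append((match["key"], match["value"].strip()))
    return trailers


def check_patch(message: str) -> dict:
    """Summarise AI attribution and human sign-off for one commit message."""
    trailers = parse_trailers(message)
    assisted = [v for k, v in trailers if k.lower() == "assisted-by"]
    signoffs = [v for k, v in trailers if k.lower() == "signed-off-by"]
    problems = []
    if not signoffs:
        problems.append("missing Signed-off-by from a human contributor")
    return {"assisted_by": assisted, "signed_off_by": signoffs, "problems": problems}
```

Given a message whose final paragraph contains `Assisted-by: ExampleAgent:example-model-1` and `Signed-off-by: Jane Developer <jane@example.org>`, `check_patch` would report one entry under each key and no problems. The sketch cannot tell whether a sign-off was actually written by a person; that certification remains a matter of trust under the Developer Certificate of Origin.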

The policy follows months of debate inside the project and reflects a middle path between rejection and unchecked adoption. Linus Torvalds has previously argued that sweeping bans on AI are unrealistic, and the new framework appears to codify that view: AI tools are permitted, but only as aids. In practice, that puts the burden back on people, who must review the code themselves and stand behind it personally.

The decision is likely to be watched closely well beyond Linux. As one of the most influential open-source projects in the world, its rules often shape broader technical norms, and other projects may now consider similar requirements for disclosure, review and accountability. For now, Linux has drawn a clear boundary: AI can help write the code, but humans must answer for it.


Source: Noah Wire Services

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We’ve since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 8

Notes:

The article was published on April 13, 2026, which is within the past 7 days. However, similar reports have appeared in other reputable sources, such as Tom’s Hardware on April 12, 2026 ([tomshardware.com](https://www.tomshardware.com/software/linux/linux-lays-down-the-law-on-ai-generated-code-yes-to-copilot-no-to-ai-slop-and-humans-take-the-fall-for-mistakes-after-months-of-fierce-debate-torvalds-and-maintainers-come-to-an-agreement?utm_source=openai)), so the information is not entirely original. The article also references the Linux kernel’s official documentation, which is publicly accessible and may have been the primary source for multiple reports.

Quotes check

Score: 7

Notes:

The article includes direct quotes attributed to Linus Torvalds and other sources. However, without access to the original kernel documentation or direct statements from Torvalds, it’s challenging to independently verify these quotes. The reliance on secondary sources raises concerns about the accuracy and authenticity of the quoted material.

Source reliability

Score: 8

Notes:

TechRadar is a well-known technology news outlet, which generally indicates a reliable source. However, the article’s reliance on secondary sources and the lack of direct access to the original kernel documentation or statements from Torvalds introduce potential reliability concerns. The absence of direct citations from the kernel’s official documentation or Torvalds’ statements makes it difficult to fully assess the reliability of the information presented.

Plausibility check

Score: 9

Notes:

The claims about the Linux kernel’s policy on AI-generated code align with the general understanding of the project’s approach to AI tools. The emphasis on human accountability and the use of an ‘Assisted-by’ tag are consistent with previous discussions and reports. However, the lack of direct access to the kernel’s official documentation or statements from Torvalds means that some details cannot be independently verified, which slightly diminishes the overall plausibility score.

Overall assessment

Verdict (FAIL, OPEN, PASS): PASS

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary:

The article provides a timely report on the Linux kernel’s policy regarding AI-generated code, aligning with information from other reputable sources. However, the reliance on secondary sources and the lack of direct access to the kernel’s official documentation or statements from Linus Torvalds introduce some uncertainties. While the overall plausibility is high, the medium confidence reflects these concerns. Editors should consider seeking direct access to the kernel’s official documentation or statements from Torvalds for full verification.