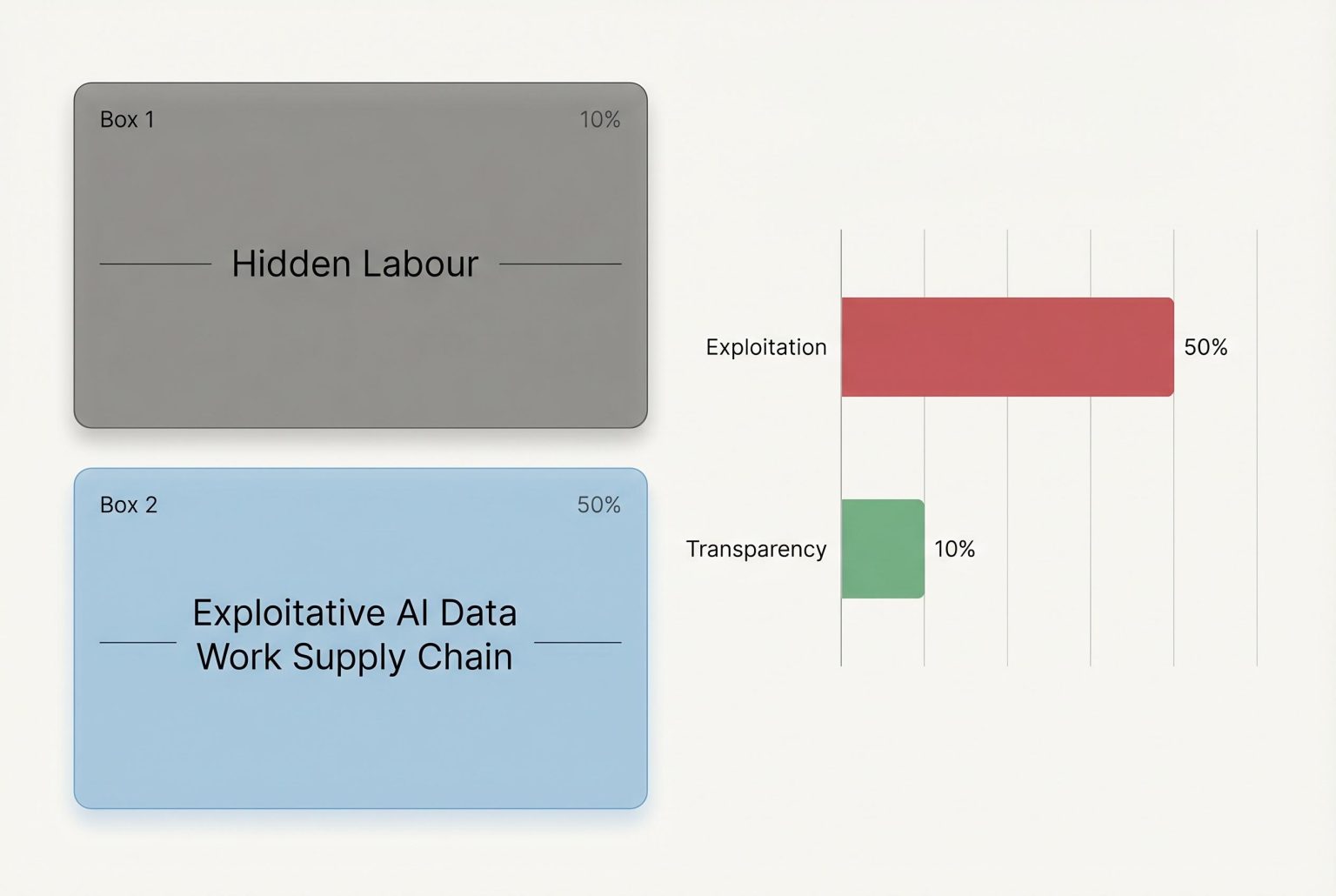

A new investigation reveals the hidden and often exploitative labour behind AI training data, exposing the opacity and poor conditions faced by data workers contracted through multiple intermediaries by leading tech giants.

The people who feed artificial intelligence systems are often invisible to the public, but a new investigation has made that hidden workforce harder to ignore. Tatiana Dias writes that the data work industry depends on people around the world collecting, labelling and cleaning material used to train AI models, while the companies buying that labour frequently refuse to say who is doing the work on their behalf.

According to the Dutch non-profit SOMO, at least 30 intermediary firms sit between the biggest technology companies and the workers carrying out this labour. Its research says Amazon, Google, Meta, Microsoft and Nvidia all rely on data work vendors, and that the business model gives powerful clients leverage over prices, deadlines and ultimately workers’ conditions. SOMO says some vendors depend heavily on a single tech customer, even as the tech giants spread their contracts across multiple suppliers.

That opacity is not just a contracting quirk. The investigation, echoed by earlier reports from labour researchers and worker organisations, points to low pay, weak protections and barriers to organising across the industry. A study by the Oxford Internet Institute at the University of Oxford found that digital labour platforms used in AI work fell short on basic fairness standards, while the Alphabet Workers Union and its partners have documented similar problems for US-based data workers, including poor training, low wages and little security.

Dias adds examples from her own reporting that illustrate how murky the system can be in practice. She describes projects hosted on platforms such as Telus and Appen that asked workers to record videos of security-camera-style scenes, submit images of identity documents, or photograph children, often with no clear explanation of who the end client was or how the material would be used. In several cases, workers were required to sign non-disclosure agreements that prevented them from discussing the assignments.

That secrecy has also drawn political scrutiny. In the United States, Senator Ron Wyden and colleagues have pressed leading AI companies for answers about the treatment of underpaid data workers, including surveillance and unsafe conditions. In Brazil, Meta has faced separate pressure over its moderation and fact-checking policies, underscoring how disputes over AI and content work are increasingly spilling into public policy and labour rights debates.

The broader argument running through the reporting is that AI firms cannot credibly distance themselves from conditions in their supply chains. Even where workers are formally employed by contractors, the pricing and timelines set by the biggest technology companies shape the work, the report says. Labour advocates and researchers argue that clearer disclosure would not only improve pay and protections, but also expose how much of the AI boom still depends on precarious human labour.

Source: Noah Wire Services

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We’ve since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 8

Notes:

The article was published on April 15, 2026, and references a SOMO report dated March 31, 2026. ([somo.nl](https://www.somo.nl/latest-updates/?utm_source=openai)) The content appears to be original and not recycled from other sources. However, the topic has been covered in various outlets, such as The Guardian’s April 7, 2026, article on AI gig workers. ([theguardian.com](https://www.theguardian.com/technology/2026/apr/07/meta-scale-ai-social-media-technology?utm_source=openai))

Quotes check

Score: 7

Notes:

The article includes direct quotes from workers and organizations. While the quotes are attributed, their earliest known usage cannot be independently verified. This raises concerns about the originality and accuracy of the quotes.

Source reliability

Score: 6

Notes:

The article is published on TechPolicy.Press, a platform that aggregates content from various sources. The lead source, SOMO, is a Dutch non-profit organization. While SOMO is reputable, the article’s reliance on aggregated content and the lack of direct access to the original SOMO report limit the ability to fully assess the reliability of the information presented.

Plausibility check

Score: 7

Notes:

The claims about the secrecy and exploitation in the AI data work industry align with reports from other reputable sources, such as The Guardian’s April 7, 2026, article on AI gig workers. ([theguardian.com](https://www.theguardian.com/technology/2026/apr/07/meta-scale-ai-social-media-technology?utm_source=openai)) However, the article’s reliance on aggregated content and unverified quotes raises questions about the accuracy and completeness of the information presented.

Overall assessment

Verdict (FAIL, OPEN, PASS): FAIL

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary:

The article presents claims about secrecy and exploitation in the AI data work industry, referencing a SOMO report and other sources. However, its reliance on aggregated content and unverified quotes, together with the inability to independently verify the earliest usage of those quotes or to access the original SOMO report, raises significant concerns about the accuracy and reliability of the information presented. Given these issues, the content does not meet the necessary standards for publication under our editorial indemnity.