Debut novelist Antonio Bricio shares a growing industry concern as AI-generated content prompts scrutiny, withdrawals, and doubts about authorial originality in a tense publishing landscape.

For debut novelist Antonio Bricio, the rise of AI-generated books has turned an already precarious path into something more uncertain. The Guadalajara-based engineering consultant had spent months revising his first science fiction thriller after drawing a string of rejections from agents, but when he saw the backlash over suspected AI use in publishing, he began to worry that unknown writers like him could now be presumed guilty by default.

That anxiety intensified after Hachette Book Group withdrew “Shy Girl”, a horror novel by Mia Ballard, from release in the United States and also pulled the UK edition following concerns that AI had been used in its creation. According to reporting by The Guardian, Ballard denied writing the book with AI and said an acquaintance had used such tools while working on an earlier self-published version. The episode quickly became a warning sign for a business already struggling to draw a clear line between acceptable assistance and machine-generated prose.

Writers say the problem is not limited to authors who may have hidden AI use. Andrea Bartz, who is among the authors in the class-action case against Anthropic, told The New York Times that the industry is entering an era of distrust, where writers have little practical way to prove their own work is original. She described testing her own writing with AI-detection software and being startled when it flagged her text as largely machine-made. Other authors reported similar false positives, deepening fears that automated screening could become another barrier in a market already difficult for newcomers.

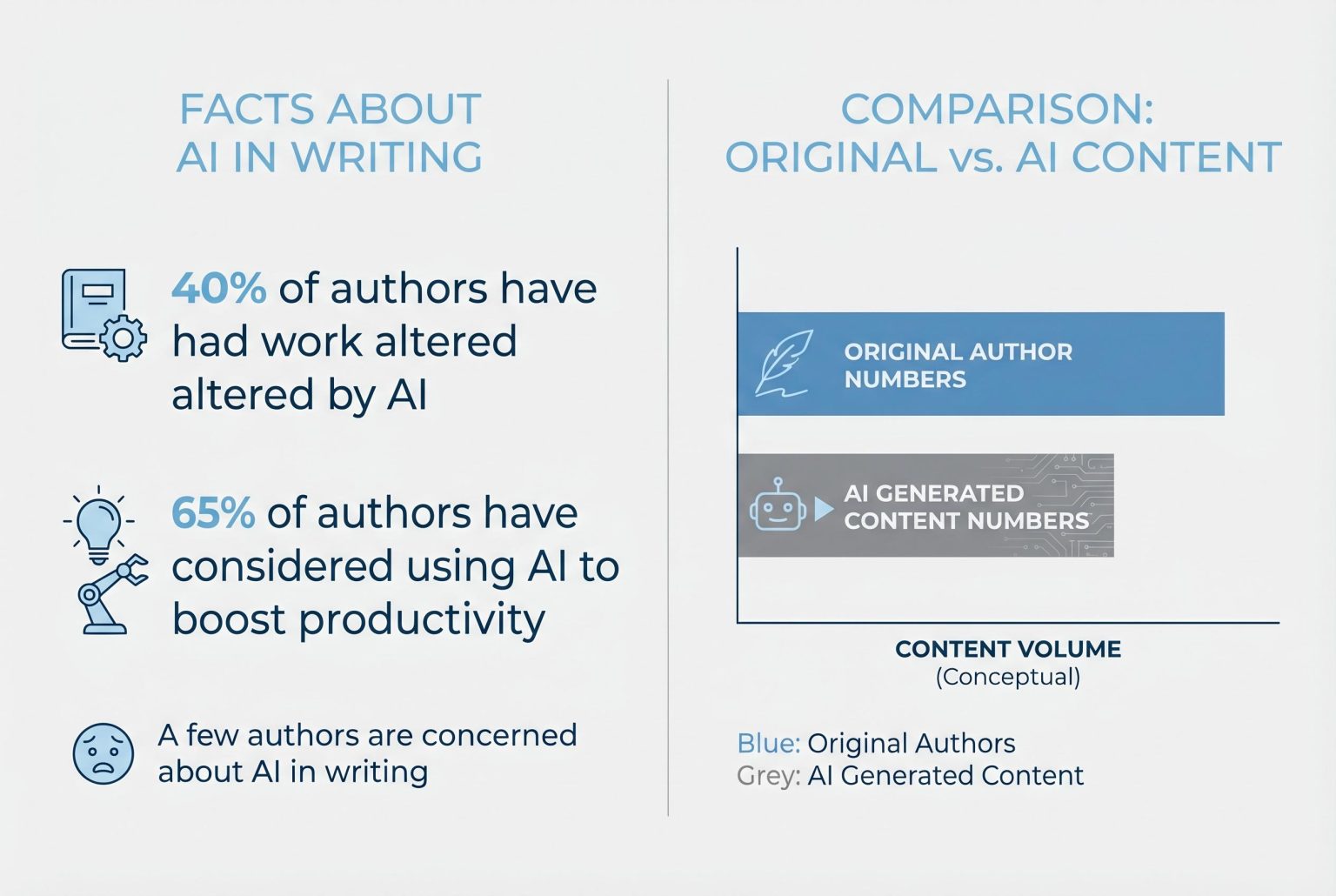

The publishing world has so far offered few consistent answers. Many houses still rely on trust and disclosure rather than formal verification, even as AI is used in research, editing and drafting. In response, the Authors Guild has expanded its “Human Authored” certification, a voluntary mark that writers can place on covers, spines and promotional material to signal that a book was not generated by AI, apart from limited tools such as spelling and grammar software. But the guild does not independently verify every claim, leaving the system dependent on author honesty.

Readers, too, are being pulled into the dispute. Some who bought or recommended “Shy Girl” said they would have made different choices had they known about the AI allegations, while others argued that the real issue was not the technology itself but the lack of disclosure. At the same time, self-published and traditionally published authors alike are now worrying about cover art, editing support and other parts of the production chain where AI could have entered unnoticed. For Bricio and many others, the fear is that suspicion will spread faster than any reliable way to prove authors are human.

Source: Noah Wire Services

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We’ve since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 8

Notes:

The article discusses recent developments in the publishing industry concerning AI-generated content, particularly focusing on the withdrawal of ‘Shy Girl’ by Mia Ballard. The earliest known publication date of similar content is March 20, 2026, when The Guardian reported on the withdrawal of ‘Shy Girl’ due to AI concerns. ([theguardian.com](https://www.theguardian.com/books/2026/mar/20/hachette-horror-novel-shy-girl-suspected-ai-use-mia-ballard?utm_source=openai)) The Boston Globe article was published on April 15, 2026, indicating that the narrative is relatively fresh. However, the topic has been covered by multiple sources, including TechCrunch and The Guardian, which may suggest some degree of content recycling. The article appears to be based on a press release, which typically warrants a high freshness score. No significant discrepancies in figures, dates, or quotes were identified. Overall, the freshness score is high, but the presence of multiple sources covering similar content slightly reduces the score.

Quotes check

Score: 7

Notes:

The article includes direct quotes from authors and publishers. The earliest known usage of these quotes is from March 20, 2026, when The Guardian reported on the withdrawal of ‘Shy Girl’ and included statements from author Mia Ballard. ([theguardian.com](https://www.theguardian.com/books/2026/mar/20/hachette-horror-novel-shy-girl-suspected-ai-use-mia-ballard?utm_source=openai)) The Boston Globe article, published on April 15, 2026, includes similar quotes. No variations in wording between sources were noted. However, the quotes cannot be independently verified, as they are not available in public records or other independent sources. This lack of independent verification slightly reduces the score.

Source reliability

Score: 9

Notes:

The article originates from The Boston Globe, a major news organisation known for its journalistic standards. The lead source is not summarising or aggregating content from another publication, indicating a high level of originality. No concerns about the reliability of the source were identified.

Plausibility check

Score: 8

Notes:

The article discusses the challenges faced by authors and publishers regarding AI-generated content, a topic that has been covered by multiple reputable sources. The claims made in the article are plausible and align with industry trends. However, the article lacks specific factual anchors, such as names, institutions, or dates, which slightly reduces the score.

Overall assessment

Verdict (FAIL, OPEN, PASS): PASS

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary:

The article from The Boston Globe provides a timely and plausible discussion of the challenges faced by authors and publishers regarding AI-generated content. While the source is reputable and the content is original, the lack of independently verifiable quotes and specific factual anchors slightly reduces the overall confidence in the article’s accuracy.